S02E08: Your Company Has Two Clocks

context graphs · event clocks · the memory layer · AI as operating model

Part 2 of the AI Operating Model series. Part 1: The 100x Employee and the AI-Native Organization.

The story below is AI fan fiction, but the companies that understand the patterns discussed will win the next decade.

In the spring of 2025, greenhouse producer Vermeulen Serres lost Kees-Jan, owner of Groentenenfruit.nl and a customer of fifteen years.

In retrospect, the churn was avoidable. In 2024, when the wholesale tomato market took a downturn, Kees-Jan switched one of his large greenhouses to strawberries. The crop change went into Dynamics in fifteen seconds. What didn’t go anywhere was the implication. His heating system had been specced for tomatoes back in 2010, the crop change invalidated those calculations, and the only person who would have spotted it was Luc, the CTO of Vermeulen Serres. Six months after the switch, Kees-Jan logged a heating modification request. A junior employee answered that the existing system was within spec and closed the ticket. The following February, under-temperature alarms went off, two thirds of Kees-Jan’s strawberry crop failed, and he moved his account to a competitor.

Vermeulen Serres is a Flemish family business. Around 75 employees, 20 million in turnover, building greenhouse complexes for industrial growers. The ERP runs on Postgres. The CRM is a Microsoft Dynamics install where data goes to decay. Accounting was forklifted to a Peppol-compliant tool when the ERP couldn’t be updated in time for the e-invoicing requirement from the EU. Sales operations runs a parallel Excel pipeline “just for our team.” This is the most common kind of mid-market company in Europe and North America, and almost nothing in the public AI discourse is written for them.

The CEO was sure Luc would have caught Kees-Jan’s issue had he seen the project. Luc has been with the company for thirty years. His title says technology, but his actual specialty is greenhouse engineering. He is the type of colleague you can stop at the coffee corner to ask why the glassware spec was changed on the 2018 Verbruggen project, and he will give you a precise answer from memory.

IT became part of his mandate because nobody else picked it up. The Kees-Jan incident was the first time the CEO identified the risk, but there would be others. Luc couldn’t be on every project. And Luc is headed for retirement in eighteen months.

The two clocks

Every system in your organization has two clocks. The state clock captures what’s true right now. It tells you who the customer is, whether a contract is active, what’s been delivered in a project, what the maintenance plan says, and so on. The event clock, if you were to build one, records not only what happened, but also in what order, and why. It answers questions like: which negotiations preceded the contract, which exceptions were granted, which decisions hold up and which can be forgotten. Most companies have decades of state-clock infrastructure. I don’t know of any with an event clock.
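To make the distinction concrete, here is a minimal sketch in Python. The class names, field names, and the date are my own assumptions for illustration; nothing here mirrors a real schema at Vermeulen Serres.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Event:
    """One entry on the event clock: what happened, when, and why."""
    on: date
    what: str
    why: str


@dataclass
class CustomerRecord:
    """State clock: current values only. Each update overwrites the last."""
    name: str
    crop: str
    events: list[Event] = field(default_factory=list)  # event clock: append-only

    def change_crop(self, new_crop: str, on: date, why: str) -> None:
        # Record the transition and its reason before the old value is lost.
        self.events.append(Event(on, f"crop {self.crop} -> {new_crop}", why))
        self.crop = new_crop  # the state clock keeps only the new value


record = CustomerRecord(name="Groentenenfruit.nl", crop="tomatoes")
# The date is hypothetical; the story only says the switch happened in 2024.
record.change_crop("strawberries", date(2024, 3, 1), "wholesale tomato prices fell")
```

After the update, `record.crop` tells you what is true now. Only `record.events` can tell you what it used to be and why it changed.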

The “context graph debate” that has dominated tech writing since January is really a debate about how to build the event clock. The losers will be the ones who treat “context graphs” the way they treated “Agile” in 2008: a container term that meant something at first, then meant less, then meant whatever the speaker wanted it to mean that day. Two terms get conflated and are worth pinning down. A knowledge graph captures the state layer: entities and their relationships. A context graph adds the event layer: causes and sequences. I’ll use “context graph” to mean the combined thing. The event clock is the floor. The ceiling, as we’ll see, is the company’s self-model.

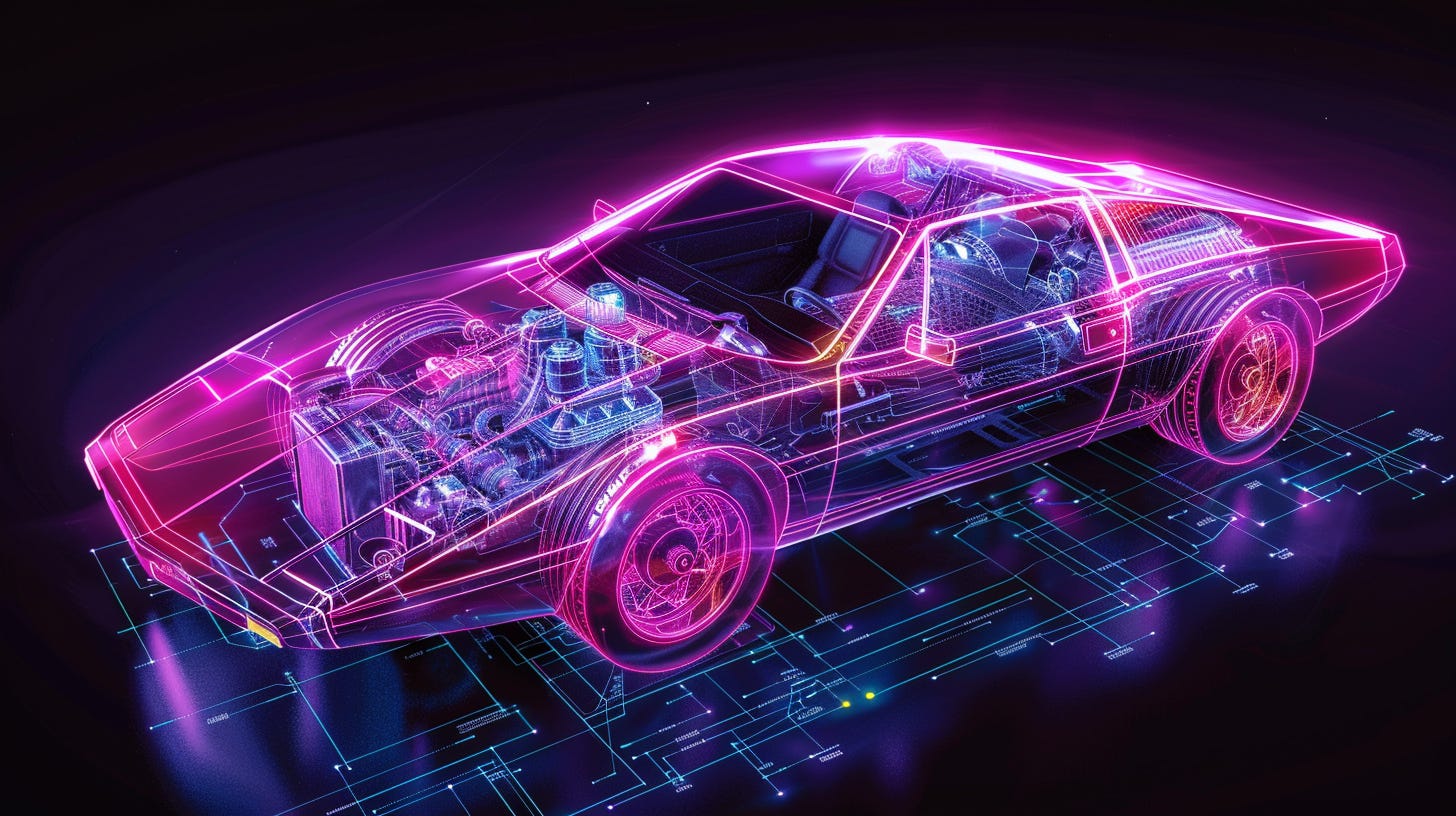

The engine and the car

So, does AI unlock context graphs?

Well, not in and of itself, because models are probabilistic. They’re sophisticated stochastic parrots that approximate the right answer most of the time. To run a business on top of one, you have to wire the AI magic into deterministic software that knows when to defer to the model and when to use (or write) plain software. Garry Tan calls these the latent and deterministic sides of the AI coin. Latent is where the model judges under uncertainty: “is this email sales-relevant?” Deterministic is where code does what code does: “subtract January revenue from February.” The art is using each in its lane.

“This is the loop that makes the whole architecture work: the latent space builds the deterministic tool, then the deterministic tool constrains the latent space.” - Garry Tan, CEO of YC
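A rough sketch of the two lanes, using the two examples above. The function names are mine, and `keyword_judge` is a hypothetical stand-in for a model call so the snippet runs offline; a real system would call an actual model there.

```python
from typing import Callable


def revenue_delta(january: float, february: float) -> float:
    """Deterministic lane: plain arithmetic, same answer every time."""
    return february - january


def is_sales_relevant(email: str, judge: Callable[[str], float],
                      threshold: float = 0.5) -> bool:
    """Latent lane: a model scores the email; code applies a fixed threshold."""
    score = judge(email)
    if not 0.0 <= score <= 1.0:
        # The deterministic wrapper constrains what the model may return.
        raise ValueError("judge must return a score in [0, 1]")
    return score >= threshold


def keyword_judge(email: str) -> float:
    """Hypothetical placeholder for a model call (assumption, not a real API)."""
    return 0.9 if "quote" in email.lower() else 0.1
```

The model never does the subtraction, and the code never guesses at relevance. The only place they meet is the score check, where the deterministic side decides whether the latent answer is usable.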

Tan’s frame is best understood with a metaphor: the AI model - the latent space - is just the engine. Latent and deterministic together are the car. The strategic assumption you can safely hold for the next decade is that the model advantage will decay faster than the organizational advantage around it. Put more simply: the engine is becoming a commodity.

Frontier models are converging across providers; some are already available locally as open source. Whatever moat your organization wants is going to live somewhere in the car. If this sounds like technical plumbing, I hate to break the news to you, but the plumbing is where the operating leverage will live.

Building the event clock

The eighteen-month clock starts. Vermeulen Serres has spent fifteen years postponing decisions about its IT systems; Luc’s retirement and the Kees-Jan incident remove the last political reason to keep deferring. The question is whether the company can encode enough of what Luc knows before he leaves, and whether it can spot the next Kees-Jan before he has the conversation.

The back office

Luc brings in an AI transformation engineer for the eighteen-month window. Her first call is the data classification: the company treats its data as proprietary but not regulated, which makes public clouds an option and unlocks half the tool stack (regulated industries face different choices).

The first build gives an agent supervised access to the company stack: the ERP, Dynamics, the project archive, supplier specs, the financials, the support inbox. Everything is read-only by default; writes go through scoped service accounts with audit logs. The agent answers questions like “which Q2 projects are running over budget on materials, and what changed?” without anyone in finance having to open a spreadsheet.
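One way to picture the access model is a small gateway that sits between the agent and the systems. This is a sketch under my own assumptions: the `Gateway` class, the account names, and the choice to log reads as well as writes are illustrative, not a description of what the engineer actually built.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Gateway:
    """Hypothetical gateway between the agent and the company systems."""
    write_scopes: dict[str, set[str]]  # service account -> systems it may write to
    audit_log: list[dict] = field(default_factory=list)

    def _log(self, account: str, action: str, system: str, allowed: bool) -> None:
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "account": account, "action": action,
            "system": system, "allowed": allowed,
        })

    def read(self, account: str, system: str) -> bool:
        self._log(account, "read", system, True)  # reads are allowed, and still logged
        return True

    def write(self, account: str, system: str) -> bool:
        # Writes succeed only for accounts explicitly scoped to that system.
        allowed = system in self.write_scopes.get(account, set())
        self._log(account, "write", system, allowed)  # denied attempts are logged too
        return allowed


gateway = Gateway(write_scopes={"svc-support": {"support_inbox"}})
can_read_erp = gateway.read("agent", "erp")
can_write_erp = gateway.write("agent", "erp")
can_reply = gateway.write("svc-support", "support_inbox")
```

The design choice worth noticing is the default: an account with no entry in `write_scopes` can read everything and write nothing.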

Letting systems talk to each other sounds not only difficult, but also boring. At least until you realize AI legibility will be the single biggest business advantage of the next decade. Despite all these IT systems, most companies are not legible to machines. Most companies are barely legible to their own people. Bear with me and assume Vermeulen Serres achieves the legibility milestone - we’ll come back to the challenges later.

Now that AI agents can read their systems of state, Vermeulen’s CEO wants to build the event clock: a system that would have caught Kees-Jan’s crop switch the moment it landed in Dynamics in 2024, instead of letting it surface eighteen months later as a churned account.

The logic sounds straightforward. A crop switch on a fifteen-year-old greenhouse should trigger a re-evaluation of the heating spec. Five other customers made a similar switch in the same window; an event-clock-aware agent would have flagged all five for engineering review before the cold snap hit.
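In its simplest form, that rule is a comparison between the crop the heating was specced for and the crop growing today. The sketch below assumes both facts are available per site; the field names and the second customer are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Greenhouse:
    customer: str
    heating_specced_for: str  # crop the original heating calculations assumed
    current_crop: str


def needs_heating_review(g: Greenhouse) -> bool:
    """Flag any site whose current crop differs from the crop the heating was specced for."""
    return g.current_crop != g.heating_specced_for


sites = [
    Greenhouse("Groentenenfruit.nl", "tomatoes", "strawberries"),
    Greenhouse("Hypothetical Grower A", "tomatoes", "tomatoes"),  # made-up customer
]
review_queue = [g.customer for g in sites if needs_heating_review(g)]
```

A real rule would need more than string inequality, since some crop changes probably don't affect the heating calculations at all. Deciding which ones do is exactly the judgement that currently lives in Luc's head.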

The architecture the engineer chooses is a vector-graph hybrid. The vector layer (Postgres + pgvector, since they already run Postgres for the ERP) handles fuzzy retrieval like “find anything that mentions delivery delays at Kees-Jan’s site.” The graph layer (Graphiti) holds the explicit relationships: this client switched crops in this month for this reason, this support ticket was opened against this delivery, this engineer wrote the heating spec on this project. Bi-temporal queries become possible. “What did the support team know about Kees-Jan’s heating on the day his ticket was closed?” Now try that with SQL.

The temporal aspect is new: every fact carries two timestamps, one for when it became true in the world and one for when the company learned about it. The team can replay the graph as it stood on the day Kees-Jan logged his heating modification request and audit what the agent should have known then. The event clock is a record of what was knowable when. This turns the graph from a database into an audit trail, and gives the company the substrate of a system that updates its self-model based on what it observes. Cybernetics, but with 2026 tools.
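The replay idea fits in a few lines of Python. This is a sketch of the concept, not of Graphiti's data model: the `Fact` class and `known_as_of` are my own names, and every date is made up for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Fact:
    subject: str
    statement: str
    valid_from: date   # when it became true in the world
    recorded_on: date  # when the company learned about it


def known_as_of(facts: list[Fact], subject: str, day: date) -> list[Fact]:
    """Replay: facts about `subject` that the company had already recorded on `day`."""
    return [f for f in facts if f.subject == subject and f.recorded_on <= day]


# Hypothetical timeline; none of these dates come from the story.
facts = [
    Fact("Kees-Jan", "heating specced for tomatoes", date(2010, 5, 1), date(2010, 5, 1)),
    Fact("Kees-Jan", "switched greenhouse to strawberries", date(2024, 3, 1), date(2024, 3, 4)),
    Fact("Kees-Jan", "switch was driven by tomato prices", date(2024, 2, 1), date(2025, 3, 10)),
]
ticket_closed = date(2024, 9, 15)
knowable = known_as_of(facts, "Kees-Jan", ticket_closed)
```

The third fact was true before the ticket was closed but only recorded afterwards, so the replay leaves it out. The first two were on file, which is the uncomfortable part: the information needed to question the "within spec" answer was already in the building.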

The meeting record

The vector-graph hybrid only works if the events get captured. The state systems (ERP, Dynamics, project archive) capture transactions and documents, not conversations. Most of what Luc knows lives in conversations, like the call with Kees-Jan where he explained why he switched crops.

The current generation of meeting tools (Granola, Fireflies, Teams’ built-in transcription) solved the recording problem, but they don’t connect the text to the structured data. Kees-Jan’s call transcript lives in Granola, but his customer record lives in Dynamics and his project history sits inside SharePoint. None of them know about the others.

The architectural approach is to make the transcript a first-class citizen in the graph. Extract the entities (Kees-Jan, the heating problem, the crop-switch reasoning) and connect them to the nodes that already exist. Add the bi-temporal edge: when Kees-Jan stated this, and when the company logged that he stated it. Now the multi-hop query from earlier gets a fourth hop: heating issue → crop switch → market reasoning → Kees-Jan’s own words on the call. The agent doesn’t just spot the pattern across five clients on the same crop trajectory; it can quote the customer back to the account manager preparing the renewal.
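The four-hop chain can be sketched as a walk over typed edges. The relation names are assumptions of mine, and the edges are hard-coded here; in a real pipeline they would come out of an extraction step over the transcript.

```python
from collections import defaultdict

# Hypothetical edges mirroring the chain described above.
edges = [
    ("heating issue", "caused_by", "crop switch"),
    ("crop switch", "motivated_by", "market reasoning"),
    ("market reasoning", "stated_in", "Kees-Jan's words on the call"),
]

graph = defaultdict(list)
for source, relation, target in edges:
    graph[source].append((relation, target))


def trace(start: str) -> list[str]:
    """Follow the first outgoing edge from each node until a node has none."""
    path, node, seen = [start], start, {start}
    while graph.get(node):
        _, node = graph[node][0]
        if node in seen:  # guard against cycles
            break
        seen.add(node)
        path.append(node)
    return path


chain = trace("heating issue")
```

The transcript is just another node. What makes it useful is that it is reachable from the ticket, so the account manager starts at the symptom and ends at the customer's own explanation.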

It’s also the answer to the retirement question. The engineer can’t extract thirty years of Luc’s judgement retroactively. She can pipe every meeting he attends through the same extraction pipeline for the eighteen months before he retires. A useful share of what he knows ends up landing in the graph that way.

What’s in the context graph stack?

The greenhouse case touched four of the six layers an agent system needs. Most arguments about context graphs are different arguments stacked on top of each other, with the participants disagreeing about different layers without noticing. Let’s unpack them.

The data layer. Most companies already have data layers: relational databases for structured records, document stores for unstructured content, search indexes for full-text retrieval. AI adds two new shapes. Vector retrieval finds things by similarity (embed the query, find chunks that mean roughly the same thing). Graph retrieval finds things by explicit connection (walk the typed relationships between entities). Memgraph and Neo4j are the household names competing to be the go-to graph store. Vectors find the haystack; graphs find the path through it. Most production systems end up running both.
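Here is a toy version of the two retrieval shapes working together, in plain Python with no database. The three-dimensional vectors and the links are invented for the example; real embeddings come from an embedding model and have hundreds of dimensions or more.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Toy embeddings (assumption: made up by hand, not produced by a model).
chunks = {
    "ticket: heating modification request": [0.9, 0.1, 0.0],
    "invoice: glass panels": [0.0, 0.2, 0.9],
    "note: delivery delay at site": [0.2, 0.9, 0.1],
}
# Typed relationships from a chunk to entities (also invented).
links = {
    "ticket: heating modification request": [
        ("opened_by", "Kees-Jan"),
        ("concerns", "heating spec 2010"),
    ],
}


def retrieve(query_vec: list[float]) -> tuple[str, list[tuple[str, str]]]:
    """Vector step finds the closest chunk; graph step returns its explicit links."""
    best = max(chunks, key=lambda c: cosine(query_vec, chunks[c]))
    return best, links.get(best, [])


hit, related = retrieve([1.0, 0.0, 0.0])
```

The similarity search lands on the heating ticket without anyone naming it. The links then say who opened it and which spec it concerns, which no amount of similarity would tell you.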

The capture problem. A lot of organizational knowledge lives in people’s heads. The state clock (who is the CFO, which plan is Kees-Jan on) is mostly captured by your existing systems. The event clock (why we awarded that off-list discount, why the NPS survey and the support tickets tell a different story) is mostly missing. Glean tries to surface relationships by indexing everything across your internal tools. Palantir embeds engineers who do the structuring by hand, but it only works with government-sized organizations. Microsoft’s GraphRAG extracts knowledge graphs from documents automatically. These are different bets on how much human discipline is required.

The wiring. Agents need to call tools, read databases, and hand off to other agents. MCP (Anthropic’s Model Context Protocol) is the wiring standard for tools. A2A (Google’s Agent-to-Agent) is the wiring standard for agent-to-agent calls. Both are now Linux Foundation projects. Low-code automation platforms like n8n sit one layer up, connecting hundreds of services without code and adding AI reasoning at specific steps. That’s where most companies without a platform team start. MCP usage itself is already shifting toward the Code Mode pattern (Cloudflare’s name for it; Anthropic describes the same idea as code execution with MCP), where tool definitions live as code the agent imports rather than as context it loads upfront, which can cut token costs by an order of magnitude.

The cockpit. Someone has to give the agent instructions and read the result. Engineering teams gravitate to code-first environments like Cursor or Claude Code. Some knowledge workers are turning to the terminal to get the most out of their harness; others are limiting themselves to Claude Cowork. The jury is still out on what AI UX will look like. Tan’s punchline on this layer: the skills are the prompts. The work has shifted from prompt-writing to skill-writing.

Memory. A chatbot forgets you when you close the tab. An agent that handles your customer accounts has to remember that Kees-Jan at Groentenenfruit.nl prefers Dutch over English, that the contract was renewed with a discount, that the billing dispute from January was resolved. Mem0 extracts and reconciles facts as conversations happen. Graphiti (Zep) keeps the full history with four timestamps per relationship: a start and an end on each of two time axes, one for the world and one for the record. Letta (formerly MemGPT) lets the agent manage its own memory the way an operating system manages RAM. These are different bets on what “remembering” actually means.
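To show what "extract and reconcile" means in the simplest case, here is a sketch that keeps one current value per fact. It is not Mem0's API or algorithm; the `reconcile` function and the key naming are assumptions I made up to illustrate the overwrite-style bet.

```python
def reconcile(memory: dict[str, str], key: str, value: str) -> str:
    """Add a new fact, update a changed one, or leave an unchanged one alone."""
    if key not in memory:
        memory[key] = value
        return "added"
    if memory[key] != value:
        memory[key] = value  # the previous value is overwritten, not kept
        return "updated"
    return "unchanged"


memory: dict[str, str] = {}
ops = [
    reconcile(memory, "Kees-Jan.language", "Dutch"),
    reconcile(memory, "Kees-Jan.billing_dispute", "open"),
    reconcile(memory, "Kees-Jan.billing_dispute", "resolved"),
    reconcile(memory, "Kees-Jan.language", "Dutch"),
]
```

Note what this version loses: once the dispute is marked resolved, the memory no longer knows it was ever open. A history-keeping design makes the opposite trade and pays for it in storage and query complexity.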

The harness. The harness is the software wrapped around the model: what context loads, which tools are available, what gets compressed, what gets saved between sessions. Claude Code, Cursor, and OpenClaw are harnesses. LangGraph and CrewAI are orchestration frameworks that coordinate multiple agents. Memory and harness are not separable design decisions. The harness is what controls how memory enters and leaves the agent’s context, and which APIs that data passes through on the way.
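A harness decision in miniature: what gets into the context window when not everything fits. The sketch below assumes a priority number per item and counts words as a crude stand-in for tokens; real harnesses use a tokenizer and far more elaborate policies.

```python
def assemble_context(items: list[tuple[int, str]], budget: int) -> list[str]:
    """Pick items by priority (lower number first) until the word budget is used up."""
    chosen, used = [], 0
    for _, text in sorted(items, key=lambda item: item[0]):
        cost = len(text.split())  # crude proxy for a token count (assumption)
        if used + cost > budget:
            continue  # skip what does not fit, keep trying smaller items
        chosen.append(text)
        used += cost
    return chosen


items = [
    (2, "Memory: Kees-Jan prefers Dutch over English."),
    (1, "System: answer only from retrieved records."),
    (3, "Transcript: " + "word " * 50),  # a long, low-priority item
]
context = assemble_context(items, budget=20)
```

With a budget of 20 words, the system instruction and the memory item get in and the long transcript is dropped. That is the coupling in practice: whether the agent "remembers" the Dutch preference depends on the priority the harness assigns to memory.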

Why this is hard

Building any of this is hard at several levels at once.

The social problem is well-known by now. The horticulture company has people who have been doing their jobs for twenty years. Some of them are excited about AI. A larger group believes that AI threatens the parts of their work they were proudest of, and they aren’t coming to workshops in good faith. A smaller group is already using their personal ChatGPT in the evenings and won’t tell anyone. The CEO has to introduce tooling that visibly redistributes work, and probably people, while the company is trying to grow. None of this is a context-graph problem, but it determines whether the context graph gets built.

The technical problem is the inheritance tax of the existing stack. The ERP runs on a Postgres version that hasn’t been upgraded in years. Dynamics is on the cloud version, but the parallel Excel pipeline in sales operations carries half the deals nobody else can see. The project archive is fifteen years of unstructured Word and PDF files, named inconsistently. There is no data governance function, deployments still route through the IT manager, and infrastructure as code is on next year’s roadmap. Building a production context graph against this substrate means doing the data-quality work that nobody has done for fifteen years, and doing it while the agent is already running in production.

Then there is a deeper, sociotechnical problem. Organizations don’t reliably know what they do, experts pattern-match in ways they can’t articulate, and most of the decisions that get filed in your CRM are post-hoc stories the company tells itself about events that happened. Capturing those stories at scale gives you a high-fidelity record of organizational fiction.

Underneath all three sits a bootstrapping problem. The companies that need a context graph most are the ones running on tribal knowledge, and tribal knowledge is exactly what can’t be queried. Discipline isn’t a byproduct of capture. It’s the other way round.

The prize is bigger this time

Despite the fog of war, one thing is certain: some companies will crack this faster than others. We saw it with agile software development, and again with DevOps. The early adopters built moats while the laggards held meetings. History will repeat.

The size of the prize is what’s different this time. Foundation Capital frames context graphs as a trillion-dollar opportunity, and the order of magnitude is probably right. Agile laggards got slower software cycles, DevOps laggards got worse uptime, and AI laggards risk being out-competed by companies with five times their operational leverage. Companies that build a working context graph end up in a different category from their peers. The historical analogy here is closer to electrification than to agile.

One last strategic point. Switching your agent’s model is straightforward; the APIs converge and the capabilities are comparable. Switching your agent’s memory layer is not: proprietary formats are coupled to vendor harnesses, and the accumulated organizational context doesn’t export. Most other infrastructure is scaffolding over current model limits and will be absorbed; memory won’t be. Whoever controls your memory layer controls the lock-in.

This matters at the procurement table. Any vendor whose roadmap doesn’t articulate how their system enables agentic use cases is selling you the past. The ERP, the CRM, the ticketing system: every renewal in the next two years is a chance to filter for vendors who can answer the question and replace the ones who can’t. Garry Tan’s version: the future belongs to people and companies that build compounding AI systems on infrastructure they own. Build the structural parts on systems you control.

In Part 3, I’ll argue that the discipline these systems require comes from AI adoption itself. Teaching an agent how your business works is the same act as making the business legible to itself for the first time. The context graph and the operating model end up being the same artifact, viewed from two angles.